A free 30-minute strategy session is the usual entry point.

We'll walk through your funnel and pinpoint where the leak is, which business metric it maps to, and the tests I'd run first against it.

No obligation.

Let's find the three tests worth running on your funnel.

Turn A/B testing into a system that actually moves CAC and trial-to-paid.

Anyone can run an A/B test. Few move the needle. I run testing as a structured program tied to CAC and trial-to-paid – so every winner shows up in business metrics, not just on the page.

ConversionLab played a key role in accelerating Campaign Monitor's growth by optimizing our landing pages for maximum performance. We increased conversion rates by 260% in the first 6 months and 1187% cumulatively across the full engagement, and saw a 64% reduction in customer acquisition cost. We are very happy with the results!

Shamita Jayakumar

Senior Marketing Manager

TRUSTED BY SAAS TEAMS AT

- Find the friction

  Heatmaps, session recordings, funnel data. The hypothesis comes from the data, not from a deck.

- Design one isolated change

  One variable, one control, one challenger. Success metric and kill criterion written before launch.

- Run to significance, never early

  95% is the default. Tests are not called early just because the line went up on a Friday.

- Ship the winner, keep the loss

  Winners become the next test's control. Losses shape the next hypothesis. The baseline only moves up.
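The four steps hinge on writing the plan down before launch. As a minimal sketch, a frozen Python dataclass captures the idea that nothing changes once a test is live; `TestPlan` and its field names are hypothetical and illustrative, not the schema of any specific testing tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after the plan is created
class TestPlan:
    """Hypothetical pre-launch test plan; field names are illustrative."""
    hypothesis: str           # drawn from heatmaps, recordings, funnel data
    changed_element: str      # the one isolated variable
    success_metric: str       # e.g. "trial signups"
    business_metric: str      # e.g. "CAC" or "trial-to-paid"
    kill_criterion: str       # written down before launch
    confidence: float = 0.95  # default significance threshold

    def __post_init__(self) -> None:
        # Refuse to create a plan that is missing what must be decided up front.
        if not 0.5 < self.confidence < 1.0:
            raise ValueError("confidence must be between 0.5 and 1.0")
        if not self.kill_criterion.strip():
            raise ValueError("a kill criterion is required before launch")
```

Freezing the record means an attempt to loosen the threshold mid-test raises an error instead of silently rewriting the plan.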

Tests that move the business metric, not just the page metric.

CVR on a page is easy to lift in isolation. The discipline is connecting every test to a metric that shows up in your P&L – so what you ship in week three is still paying out in quarter four.

WHAT YOU WIN

CUSTOMER ACQUISITION – FOR B2B SAAS

© 2026 ConversionLab

Free · 30 minutes · No commitment

ConversionLab is a Nordic CRO agency for B2B SaaS companies, serving clients across the Nordics, Europe, and North America. I run structured A/B testing on landing pages, trial flows, and pricing pages to increase conversion rates and reduce customer acquisition cost – without requiring changes to your product or ad spend.

RESOURCES

Prioritization starts with the business metric I'm trying to move – usually CAC on a paid funnel, or trial-to-paid on the signup flow. From there, the first test is almost always one of three categories: message match between ads and the landing page, CTA clarity, or form friction. These produce the largest CAC drop per dev hour because they're the friction most teams haven't tested yet.
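One way to make "largest CAC drop per dev hour" concrete is to score each candidate test by its expected CAC reduction divided by build effort. This is a minimal sketch with a hypothetical `Candidate` record and scoring rule, not a fixed formula; the expected drop and win probability are judgment calls made before the test runs.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical backlog entry for a proposed test."""
    name: str
    category: str              # e.g. "message match", "CTA clarity", "form friction"
    expected_cac_drop: float   # estimated relative CAC reduction if it wins (0.10 = 10%)
    win_probability: float     # rough prior that the challenger beats control
    dev_hours: float           # build and QA effort

    @property
    def score(self) -> float:
        # Expected CAC drop per dev hour (illustrative scoring rule).
        return self.expected_cac_drop * self.win_probability / self.dev_hours


def prioritize(candidates: list[Candidate]) -> list[Candidate]:
    """Order the backlog with the highest expected CAC drop per dev hour first."""
    return sorted(candidates, key=lambda c: c.score, reverse=True)
```

Cheap, high-probability fixes such as trimming a form tend to outrank large rebuilds under this rule even when the rebuild's upside is bigger.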

The tool isn't the problem. Most stalled testing programs share the same three gaps: no prioritization framework, no link from CVR to a business metric like CAC, and no system for capturing learnings when tests lose. I bring the framework. You keep the tool – I work with Optimizely, VWO, Convert, AB Tasty, and Google Optimize replacements.

Until it reaches statistical significance, not until a fixed end date. As a rule of thumb, I want at least 1,000 conversions per variant and two full business cycles – usually two weeks minimum. Calling tests early is the most common mistake in CRO and the fastest way to produce wins that don't replicate when you scale ad spend.
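The conditions in that rule of thumb can be checked together before a test is called. A minimal sketch, assuming one business cycle equals one calendar week and that `p_value` comes from whatever significance test your testing tool reports; the function name and defaults are illustrative.

```python
def ready_to_call(conversions_per_variant: list[int], days_running: int,
                  p_value: float, alpha: float = 0.05,
                  min_conversions: int = 1000,
                  min_full_weeks: int = 2) -> tuple[bool, str]:
    """Return (ready, reason). Every condition must hold before calling a test.

    Assumes a business cycle is 7 days; names and defaults are hypothetical.
    """
    if min(conversions_per_variant) < min_conversions:
        return False, "not enough conversions per variant"
    if days_running // 7 < min_full_weeks:
        return False, f"fewer than {min_full_weeks} full weekly cycles"
    if p_value >= alpha:
        return False, "not significant yet"
    return True, "ready to call"
```

A significant p-value on day 10 still returns "not ready", which is the point: the line going up early is not enough on its own.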

95% as the default. 90% for directional tests where the cost of being wrong is low. 99% for pricing or revenue-critical changes where the cost of being wrong is high. The threshold is a decision per test, not a fixed constant.

It's reported as inconclusive. No winner pill, no claim, no story. Calling a flat test a win is the fastest way to lose trust with the data – and the team relying on it.
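A per-test threshold plus an explicit "inconclusive" outcome can be sketched with a standard two-sided two-proportion z-test. This assumes a simple fixed-sample comparison of conversion counts; the risk labels in `CONFIDENCE_BY_RISK` are hypothetical names for the 90/95/99% tiers above, and testing tools may use different statistics (for example Bayesian or sequential methods).

```python
import math

# Hypothetical labels for the three thresholds described above.
CONFIDENCE_BY_RISK = {"directional": 0.90, "default": 0.95, "revenue_critical": 0.99}


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all: nothing to conclude
    z = (p_b - p_a) / se
    return math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))


def verdict(conv_a: int, n_a: int, conv_b: int, n_b: int,
            risk: str = "default") -> str:
    """Classify challenger (b) against control (a): winner, loser, or inconclusive."""
    alpha = 1 - CONFIDENCE_BY_RISK[risk]
    if two_proportion_p_value(conv_a, n_a, conv_b, n_b) >= alpha:
        return "inconclusive"  # no winner pill, no claim
    return "winner" if conv_b / n_b > conv_a / n_a else "loser"
```

The same data can be a winner at 95% and inconclusive at 99%, which is why the threshold is chosen per test before launch rather than after the results are in.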

Around 5,000–10,000 monthly visitors to the page being tested is a comfortable starting point for reaching statistical significance on meaningful tests. How long each test takes depends on your baseline conversion rate and the size of lift you're trying to detect. Lower traffic isn't a dealbreaker – it just shifts the strategy toward fewer, bolder tests rather than many small iterations.
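The traffic a test needs depends on the baseline conversion rate and the smallest lift worth detecting. This sketch uses the standard normal-approximation sample-size formula for comparing two proportions; the 80% power default is an assumption (a common convention), not a figure from the process above.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_cvr: float, relative_lift: float,
                         confidence: float = 0.95, power: float = 0.80) -> int:
    """Visitors needed in EACH variant to detect `relative_lift` over `baseline_cvr`.

    Two-sided test, normal approximation; power = 0.80 is an assumed default.
    """
    p1 = baseline_cvr
    p2 = baseline_cvr * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)
```

At a 5% baseline, detecting a 20% relative lift at 95% confidence takes a little over 8,000 visitors per variant, and halving the target lift needs close to four times as many. That arithmetic is why lower traffic pushes toward fewer, bolder tests.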

Frequently asked questions

Lower CAC from the ad spend you already have

Every winning landing-page test means more trials and demos from the same paid traffic. The ad budget stays flat, the cost per acquisition drops. Campaign Monitor cut CAC by 64% across the engagement.
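With ad spend and traffic held flat, CAC moves inversely with conversion rate. A minimal sketch of that arithmetic, assuming every downstream rate (trial-to-paid, close rate) stays unchanged so paying customers scale linearly with landing-page CVR; the function names are illustrative.

```python
def cac(ad_spend: float, paying_customers: float) -> float:
    """Customer acquisition cost: spend divided by customers acquired."""
    return ad_spend / paying_customers


def cac_change_from_cvr_multiplier(multiplier: float) -> float:
    """Relative CAC change when spend and traffic are flat and CVR is multiplied.

    Assumes downstream rates are unchanged, so customers scale with CVR.
    """
    return 1 / multiplier - 1


def cvr_multiplier_for_cac_change(cac_change: float) -> float:
    """Inverse: the CVR multiplier implied by a given relative CAC change."""
    return 1 / (1 + cac_change)
```

Under that assumption, 2.5× more trials from the same spend means a 60% lower cost per trial, and a 64% CAC reduction is equivalent to roughly 2.8× as many customers from the same budget.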

Stronger trial-to-paid without redesigning the product

Onboarding, signup flows, pricing pages. The same testing discipline applies inside the funnel – and the lift compounds into expansion revenue. A single layout test on BetterWorld's signup page lifted trial signups by 204%.

Every change defensible in a board meeting

No juniors, no account managers, no handoffs. The person who writes the hypothesis is the person who runs the test – and the one you can actually talk to.

A repeatable loop, run every time.

Same four steps on every test, every client, every page. Each cycle ties back to one business metric – CAC, trial-to-paid, expansion – so winners don't just lift CVR, they show up in the P&L.

HOW IT WORKS

One hypothesis · One result

Not every test wins. That's how you know the bar is high enough.

HONEST ABOUT LOSSES

One test, 2.5× more trials from the same ad spend.

Campaign Monitor's automation ads sent traffic to a page leading with a generic email-marketing headline. The challenger restated the ad's promise word-for-word above the fold. Same traffic, same offer, same form – only the message match changed. The challenger more than doubled the conversion rate, and CAC dropped accordingly.

REAL TEST · REAL NUMBERS

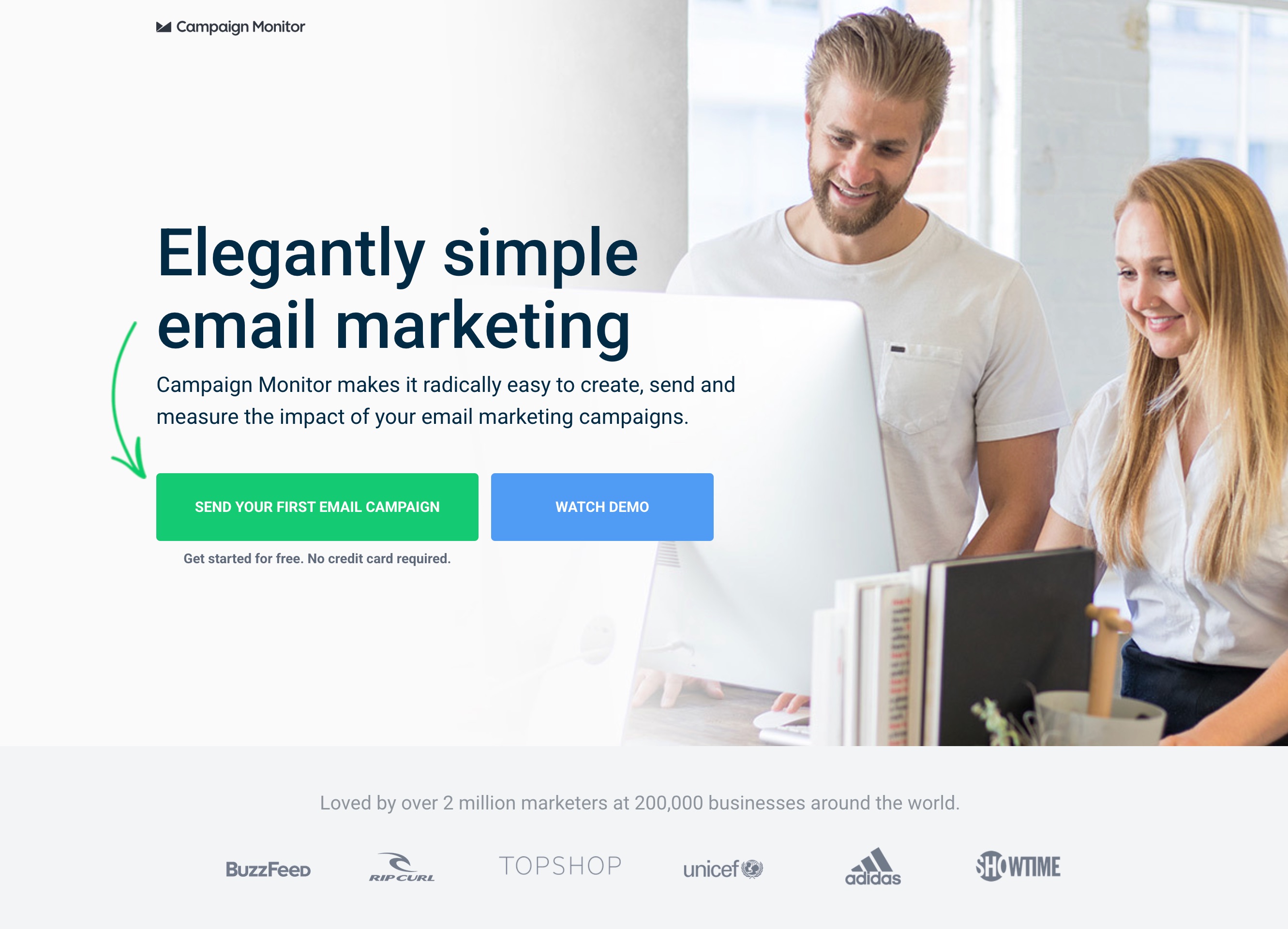

Elegantly simple email marketing

Generic value proposition with no direct match to the automation ad copy driving traffic.

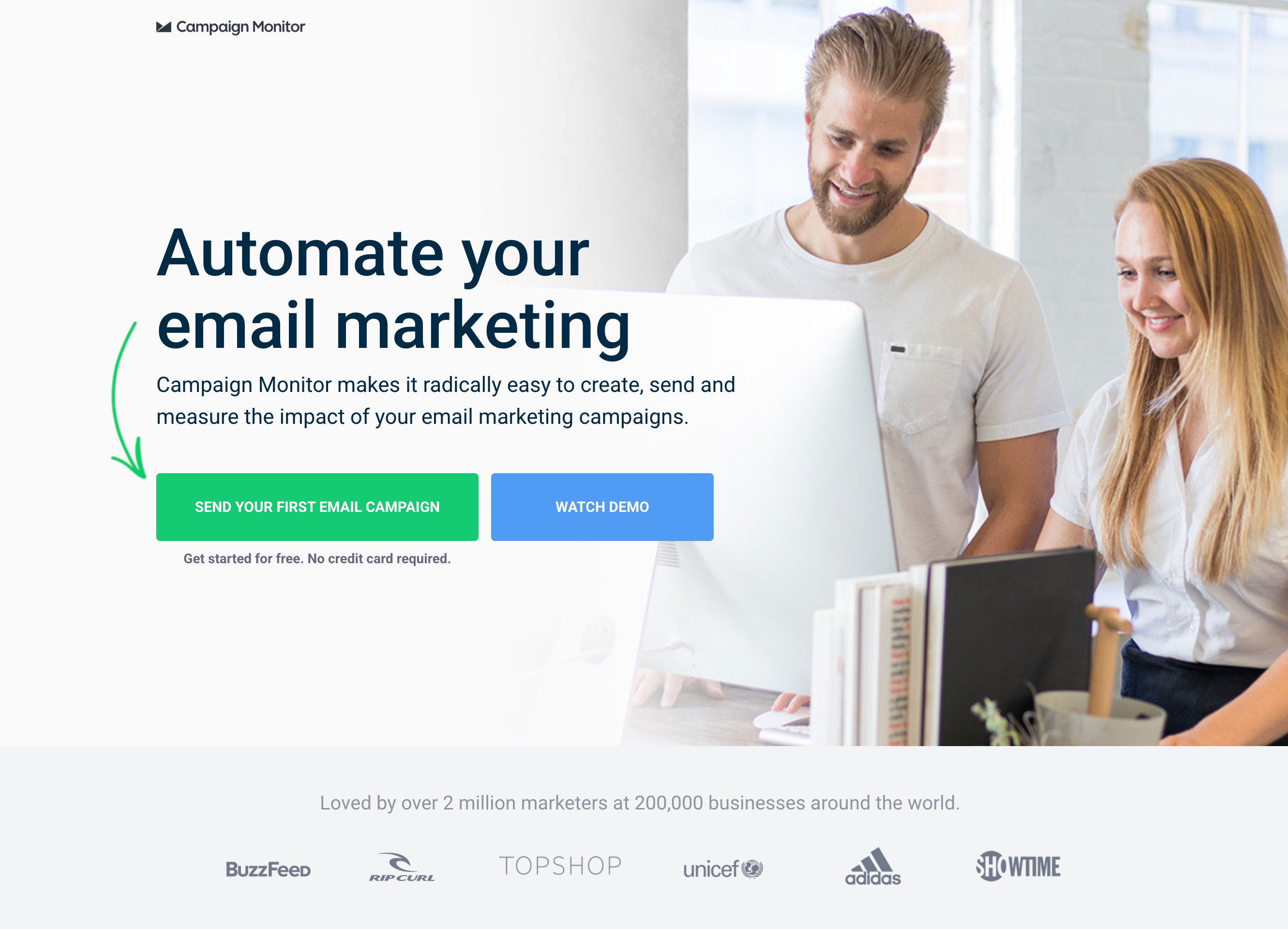

Automate your email marketing

Headline matches the ad's automation promise. Visitor's mental thread from ad to page stays unbroken.

A real test program produces losses. If 100% of tests win, the hypotheses are too easy – you're optimizing things that were never the friction.

Losses are reported in full. Inconclusive tests stay inconclusive. The next hypothesis is built on the truth, not a story.

Outcomes across the test library

-64%

CAC REDUCTION

Same ad spend, far more paying customers – Campaign Monitor

+1187%

TOTAL CVR UPLIFT

Cumulative across 33 tests over the engagement – Campaign Monitor

+204%

TRIAL SIGNUPS

From one layout simplification test on the signup page – BetterWorld
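Because each winner becomes the next test's control, lifts multiply rather than add. A minimal sketch of that compounding, assuming the list contains only shipped winners; losses and inconclusive tests never replace the control, so they contribute a factor of 1 and are simply left out. The function names are illustrative.

```python
import math


def cumulative_lift(winning_lifts: list[float]) -> float:
    """Compound the relative lifts of shipped winners (0.20 = +20%).

    Each winner becomes the next control, so lifts multiply. Losses and
    inconclusive tests are not shipped and are omitted (factor of 1).
    """
    return math.prod(1 + lift for lift in winning_lifts) - 1


def per_win_equivalent(total_lift: float, wins: int) -> float:
    """Constant per-win lift that would compound to the same total."""
    return (1 + total_lift) ** (1 / wins) - 1
```

Two +20% wins compound to +44%, not +40%. Read as a compounded figure, +1187% means the final control converts at about 12.9× the original page's rate.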